[Russian version is in progress now, will be published ASAP]

Hello, CodeForces.

I think that matroids are beautiful and powerful concept, however, not really well known in competitive programming.

I’ve discovered matroids at 2019 Petrozavodsk Winter Training Camp. There was a problem that clearly cannot be solved using usual techniques I knew, editorial for this problem was just these three words “just matroid intersection”. Back then it took me more than 2 days of upsolving to find all the information and details I need and implement solution that gets AC on this. And it took way longer to actually understand why does it work and exactly how does it work. (I still hesitate in some details.)

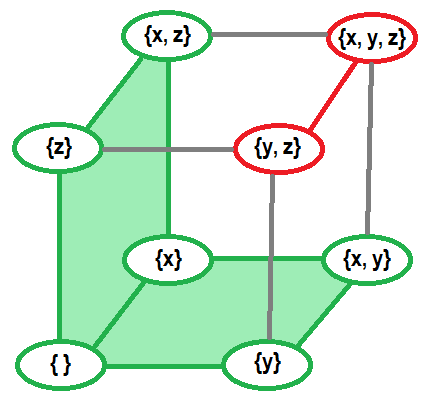

Of course, it is not hard to google up all the definitions and some related articles, but in my opinion they all are focused more on mathematical part of theory, strict proofs in some not really obvious but short ways, and observing only key points in whole theory, omitting a lot of intermediate results, logical steps and examples. In some article I’ve encountered this heavy-formula proof:

That was nowhere close to being clear for me. (Probably I’m not mathematician enough, yet.) But still, I’m sure there is a way to make this more clear and detailed.

I am sure, there are a lot of people in CP who will also like to get familiar with matroids. So, I’ve decided to review this topic from very beginning focusing more on explanations, logical steps and providing more examples. This is the goal of this article, and you do not need to know anything about matroids beforehand. .

Prerequisites. You are not required to know well everything listed here, but it is good to be familiar with some of these concepts for better and faster understanding:

- Basics of set theory and set operations

- Spanning trees in graphs

- Matchings in bipartite graphs

- Linear independence in vector spaces

- Rank and basis of set of vectors

- Gaussian elimination

- Kruskal’s minimum spanning tree algorithm

- Kuhn’s bipartite matching algorithm

- Enjoying discrete math

All the information that used in this article is my own understanding of this topic, that I’ve learned from other articles found on the web. And as we know, proof by passing various tests is not a proper proof. So if you find something illogical or wrong, that should mean that I’ve made a mistake or misinterpret something, please point it out.

What matroid is?

Matroid is a structure that abstracts and generalizes the notion of linear independence in vector spaces.

Here goes the formal definition. Matroid is a pair $$$\left\langle X, I \right\rangle$$$ where $$$X$$$ is called ground set and $$$I$$$ is set of all independent subsets of $$$X$$$. In other words matroid $$$\left\langle X, I \right\rangle$$$ gives a classification for each subset of $$$X$$$ to be either independent or dependent (included in $$$I$$$ or not included in $$$I$$$).

Of course, we are not speaking about arbitrary classifications. These $$$3$$$ properties must hold for any matroid:

- Empty set is independent.

- Any subset of independent set is independent.

- If independent set $$$A$$$ has smaller size than independent set $$$B$$$, there exist at least one element in $$$B$$$ that can be added into $$$A$$$ without loss of independency.

These are axiomatic properties of matroid. To prove that we are dealing with matroid, we generally have to prove these three properties.

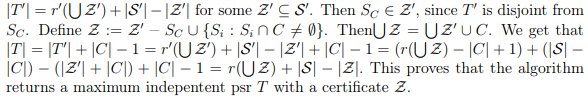

For example, explicit presentation of matroid on ground set $$$\{x, y, z\}$$$ which considers $$$\{y, z\}$$$ and $$$\{x, y, x\}$$$ to be dependent and marked red. Other sets are independent and marked green.

From this definition we can derive some other important concepts (examples for all these concepts will be shown later, along with examples of matroids):

Bases. Any independent set of maximum size is a basis of given matroid. In other words there is not element that can be added to a basis without loss of independency. All bases have equal size (otherwise we can add something to smaller basis from greater basis by third axiomatic property). Directly from previous, no basis is a subset of other basis. Any independent set is a subset of some basis (by third property we can continue increasing its size until reaching some basis), so it is enough to only know about all bases of matroid to completely describe it.

Circuits. Dependent set is a circuit if all subsets (excluding whole set) of this set are independent. In other words, circuit is dependent set, that does not allow removing any of its elements without gaining independence. No circuit is a subset of another circuit (otherwise we can remove some elements from greater circuit without removing dependence).

Another important property of circuits, symmetric difference of two different circuits always contain a circuit. Symmetric difference of two sets contains all elements that are included only in one of the sets (formally, $$$A \Delta B = (A \setminus B) \cup (B \setminus A)$$$). This property is related to cycle spaces, in case of matroids this can be generalized to “circuit spaces”.

Ranks. Ranking function. Rank of matroid is size of its bases. But we can directly refer it as size of the basis, why would we need specific definition for such thing? Yes, this is not really a flexible thing (we can refer it as size of basis), to make it more flexible we can define ranking function $$$r(S)$$$ that tells maximum size of independent subset for some $$$S$$$, which is subset of ground set. Value of ranking function on some subset can be referred as rank of this subset in matroid. This information about subsets can be very helpful in many situations. Some properties of ranking functions:

- Rank of subset cannot be greater than size of this subset.

- Rank of independent subset is size of this subset.

- Rank of whole ground set is equal to rank of matroid. Rank of empty set is $$$0$$$.

- Rank of any subset of set $$$S$$$ is not greater than rank of $$$S$$$.

- Including $$$1$$$ element into set can only increase its rank by $$$1$$$ or leave it unchanged. (formally, for some set $$$S$$$ and element $$$x \notin S$$$ we have $$$r(S) \leq r(S \cup \{x\}) \leq r(S)+1$$$)

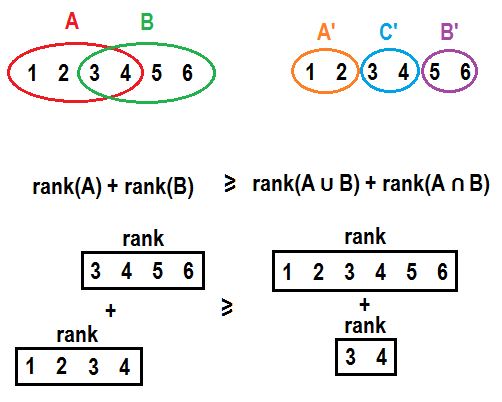

- Ranking function of matroid is submodular. If we have two sets $$$A$$$ and $$$B$$$, moving all elements that are present in $$$B$$$ but not present in $$$A$$$ from $$$B$$$ to $$$A$$$ will not improve sum of ranks of both sets. Formally, $$$r(A) + r(B) \geq r(A \cup B) + r(A \cap B)$$$. In general element will not contribute more to a rank if it is moved to a set with additional elements. This might get more clear if explicitly split $$$A$$$ and $$$B$$$ into $$$A’ = A \setminus B$$$, $$$C’ = A \cap B$$$ and $$$B’ = B \setminus A$$$ and write it in a form $$$r(A’ \cup C’) + r(B’ \cup C’) \geq r(A’ \cup B’ \cup C’) + r(C’)$$$. See pictures below for example:

Speaking informally, principle “it is better not to puts many eggs into one basket” works here.

Independence oracle. Speaking about classifications of subsets, we usually cannot afford storing it as some information about each subset (it will result in exponential memory consumption). We need some other tools to test subsets for independence. If matroid is defined as a consequence of relations among elements in some other structure, we usually (probably, always?) can find algorithm with polynomial running time to test some subset of elements for independence. As we would like to abstract concept of matroids and use them regardless of their underlying independence mechanism, we can define independence oracle as subroutine that tests some subset for independence. And we can measure running time of some algorithms as the number of oracle calls. (However, in practice we cannot completely forget about this, because we will miss great opportunities to optimize related algorithms.)

Examples

There are matroids of different types. There are some examples:

Uniform matroid. Matroid that considers subset $$$S$$$ independent if size of $$$S$$$ is not greater than some constant $$$k$$$ ($$$|S| \leq k$$$). Simplest one, this matroid does not really distinguish elements of ground set in any form, it only cares about number of taken elements. All subsets of size $$$k$$$ are bases for this matroid, all subsets of size $$$(k+1)$$$ are circuits for this matroid. We can also define some specific cases of uniform matroid:

- Trivial matroid. $$$k = 0$$$. Only empty set is considered independent, any element of ground set is considered dependent (any combination of ground set elements is also considered dependent as a consequence).

- Complete matroid. $$$k = |X|$$$. All subsets are considered independent including complete ground set itself.

Linear (algebra) matroid. Ground set consists of vectors of some vector space. Set of vectors is considered independent if it is linearly independent (no vector can be expressed as linear combination of other vectors from that set). This is the matroid from which whole matroid theory originates from. Linear bases of vector set are bases of matroid. Any circuit of this matroid is set of vectors, where each vector can be expressed as combination of all other vectors, but this combination involves all other vectors in circuit. Independence oracle for this matroid can be based on gaussian elimination.

Colorful matroid. Ground set consists of colored elements. Each element has exactly one color. Set of elements is independent if no pair of included elements share a color. Rank of a set is amount of different colors included into a set. Bases of this matroid are sets that have exactly one element of each color. Circuits of this matroid are all possible pairs of elements of the same color. Colors can be enumerated with integers for practical purposes.

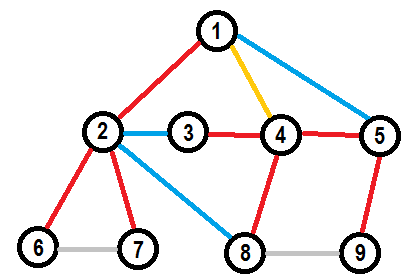

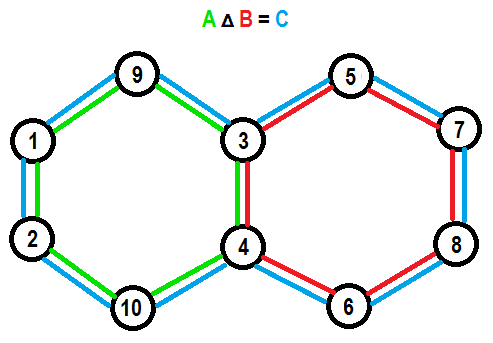

Graphic matroid. This matroid is defined on edges of some undirected graph. Set of edges is independent if it does not contain a cycle. This type of matroids is the greatest one to show some visual examples, because it can include dependent subsets of a large size and can be represented on a picture at the same time. If graph is connected then any basis of this graph is just a spanning tree of this graph. If graph is not connected then basis is a forest of spanning trees that include one spanning tree for each connected component. Circuits are simple loops of this graph. Independence oracle for this matroid type can be implemented with DFS, BFS (start from each vertex in graph and check that no edge connect a vertex with already visited one) or DSU (keep connected components, start with disjoint vertices, join by all edges and ensure that each edge connected different components upon addition). Here is an example of circuit combinations property in graphic matroid:

Truncated matroid. We can limit rank of any matroid by some number $$$k$$$ without breaking matroid properties. For example, base of truncated colorful matroid is set of elements that include no more than $$$k$$$ different colors and all colors are unique. Base of truncated graphic matroid is acyclic set of edges that leaves at least $$$(n-k)$$$ connected components in the graph (where $$$n$$$ is amount if vertices in a graph). This is possible because third matroid property does not only refer to bases of matroid, but to any independent set in matroid, and when all independent sets with sizes greater than $$$k$$$ are simultaneously removed, independent sets of size $$$k$$$ become new bases and for any lesser independent set can still find elements from each basis that can be added.

Matroid on a subset of ground set. We can limit ground set of matroid to its subset without breaking matroid properties. This is possible because rules of dependence does not rely on specific elements being in ground set. If we remove an edge from a graph, we will still have a valid graph. If we remove an element from set (of vectors or colored elements) we will still get a valid set of some element of the same type, and rules will preserve. Now, we can also define rank of subset in matroid as a rank of matroid on a ground set limited to this subset.

Expanded matroid. Direct matroid sum. We can consider two matroids as one big matroid without any difficulties if elements of ground set of first matroid does not affect independence, neither intersect with elements of ground set of second matroid and vise versa. Think of two graphic matroids on two connected graphs. We can unite their graphs together resulting in graph with two connected components, but it is clear that including some edges in one component have no effect on other component. This is called direct matroid sum. Formally, $$$M_1 = \left\langle X_1, I_1 \right\rangle, M_2 = \left\langle X_2, I_2 \right\rangle, M_1 + M_2 = \left\langle X_1 \cup X_2, I_1 \times I_2 \right\rangle$$$, where $$$\times$$$ means cartesian product of two sets. You can unite as many matroids of as many different types without restrictions as you want (if you can find some use for the result).

This is not the only types of matroids. You can find more information about useful matroid types or even create your own.

Rado-Edmonds algorithm

Now, speaking about practical purposes of matroids. We have the following problem: we need to find a basis in matroid $$$M = \left\langle X, I \right\rangle$$$. How do we approach this problem efficiently?

According to third matroid property, if we have some independent set $$$S$$$ which size is less than size of a basis we can find some element $$$x$$$ that can be added to $$$S$$$ without loss of independence. So, we can start with empty set that is guaranteed to be independent and add elements one-by-one performing a linear scan over ground set to find next element to add. If we denote $$$n$$$ as a size of ground set $$$X$$$ and $$$r$$$ as a rank of $$$M$$$ we have algorithm that runs in $$$\mathcal{O}(r \cdot n)$$$ oracle calls.

But we can do it better, notice that if some element $$$x$$$ wasn’t added into $$$S$$$ on some step it will never be possible to include it on any future step, because if $$$S \cup \{x\}$$$ was considered dependent on some step it includes some circuit $$$C$$$ and we never exclude elements in this algorithm, so any future expanded version of $$$S$$$ will also confirm $$$S \cup \{x\}$$$ to include some circuit $$$C$$$ and be dependent. So we can find a basis in one single scan, if we will take elements greedily (include element into $$$S$$$ if it does not break independence of $$$S$$$ at the step). So, we have improved runtime to $$$\mathcal{O}(n)$$$ oracle calls.

But, what about weighted case? Let each element $$$x$$$ of the ground set have some weight $$$w(x)$$$. We want to find a basis in our matroid with minimal sum of weights.

You probably know about Kruskal’s minimum spanning tree algorithm, which actually solves the problem for graphic matroid, but how do you prove it without recalling some of matroid properties?

Keeping in mind greedy ideas, we can try to connect results of $$$k$$$-th and $$$(k+1)$$$-th steps of algorithm to show something. Let’s assume that $$$A$$$ is the minimum weight independent set of size $$$k$$$ and $$$B$$$ is minimum weight independent set of size $$$(k+1)$$$. By third matroid property, we have at least one element $$$y$$$ in $$$B$$$ that can be added into $$$A$$$ without loss of independency. So, we have sets $$$B \setminus \{y\}$$$ of size $$$k$$$ and $$$A \cup \{y\}$$$ of size $$$(k+1)$$$. As we know what are minimum weighted sets, we can write down:

$$$A \leq B \setminus \{y\}$$$

$$$B \leq A \cup \{y\}$$$

we add $$$y$$$ to both sets in first inequality and get:

$$$A \cup \{y\} \leq (B \setminus \{y\}) \cup \{y\}$$$

$$$A \cup \{y\} \leq B$$$

we can see that

$$$B \leq A \cup \{y\} \leq B$$$

which means

$$$B = A \cup \{y\}$$$

In other words, we can get minimum weight independent set of size $$$(k+1)$$$ from minimum weight independent set of size $$$k$$$ by including some element $$$y$$$ into it which will not break independence. What if we have multiple of such elements $$$y$$$? Greedy answer, $$$y$$$ with minimum weight.

Combining this with previous result we can present algorithm that will find basis with minimum sum of weights:

- Sort elements of ground set by ascending weight

- Start with empty set $$$S$$$

- Iterate elements of ground set in sorted order, at each step include current element into $$$S$$$ if it does not make $$$S$$$ dependent

Running time is $$$\mathcal{O}(n \cdot log(n))$$$ unit operations for sort plus $$$\mathcal{O}(n)$$$ oracle calls.

This is Rado-Edmonds algorithm. As an example for specific matroid type we have Kruskal’s minimum spanning tree algorithm, mentioned above.

If finding a basis of any matroid is done greedily and matroids are about generalizing things, probably we can prove that all greedy problems can be reduced to finding a base of some matroid? Well, no.

In greedy solutions we can sometimes not only sort elements, but also create additional elements in process or use some specific comparators or skip / take conditions. Even if we forget about weights and put some additional constraints, we can only use matroids in some specific cases. In general, if you want to prove that matroids are applicable here, you can focus on proving axiomatic properties.

Matroid intersection

And you may ask “Why would we ever need all these generalizations, if it does not invent anything new, anything that is not covered with other more situational and problem-specific algorithms?” Well, there are some problems that are extremely hard (in my opinion) to solve without using these generalizations. One of this problems is the problem of matroid intersection.

More detailed, this problem should be called “finding largest common independent set in intersection of two matroids”. Formally, we are given two matroids $$$M_1 = \left\langle X, I_1 \right\rangle$$$ and $$$M_2 = \left\langle X, I_2 \right\rangle$$$ and we want to find any set $$$S$$$ with $$$\max\limits_{S \in I_1 \cap I_2} |S|$$$. In other words largest possible $$$S$$$ that is considered independent by both matroids.

We can show multiple examples of such problems. I mostly like these:

Colorful spanning tree. You are given a graph and a color for each edge in that graph. You have to find spanning tree of this graph that has no more than one edge of each color. Clearly, matroid classifications are “set of edges contains no more than two edges of a same color” and “set of edges contains no cycles”.

Colorful linear basis. You are given a set of vectors in some vector space and a color for each given vector. You have to find a basis of given set of vectors that includes no more than one vector of each color. Here we go with linear independence on one and unique colors on the other.

Bipartite graph maximum matching. Well known problem, you are given bipartite graph find subset of edges of maximum size such that no pair of edges from this subset share a vertex. Might not be obvious, what should we do here, but think of easier version of the problem: replace “no pair share a vertex” with ”no pair share a vertex from the left part”. Now we can easily solve that problem with picking one edge for each vertex on a left part with non-zero power, also we can show that it can be done greedily and it forms a proper matroid. And as we have two parts in given graph, we can obtain second matroid for the right part symmetrically and solve intersection problem.

Wait, but why exactly two matroids? What about intersecting more? Well, that significantly expands the number of problems that can be reduced to intersection of multiple matroids. In fact this stuff becomes general enough to offer a solution for Hamiltonian path problem. Given directed graph, intersect these three matroids on ground set of edges of a graph:

- Ensure each vertex has at most $$$1$$$ outcoming power (can be represented as colorful matroid, paint edges that come out of the same vertex into one color)

- Ensure each vertex has at most $$$1$$$ incoming power (just as previous one)

- Ensure there is no loops (forget about edges direction and check that edges form a forest of spanning trees)

And… it is NP-complete. Which means we have no idea how to solve it in polynomial time.

So, returning to two matroids. How can we approach this problems? Bruteforce exponential solution is pretty obvious (check all the sets from either $$$I_1$$$ or $$$I_2$$$). Difficulties start from the point that $$$\left\langle X, I_1 \cap I_2 \right\rangle$$$ is not guaranteed to form a proper matroid. As we know finding a basis of any matroid can be done greedily and, well, randomly picking edges into a matching is not a great way to solve it.

Probably, you know about Kuhn’s bipartite matching algorithm and using the fact that these problems are similar you may somehow come to a general solution.

We need some observations here. Consider single matroid $$$M = \left\langle X, I \right\rangle$$$ and some basis $$$B$$$ in it (and this basis is not unique, otherwise that would be a trivial case). We can always find some element $$$p$$$ that can be excluded from $$$B$$$ and some other element $$$q$$$ can be included in $$$B$$$ instead, so that it will not break independence (formally, $$$B \setminus \{p\} \cup \{q\} \in I$$$).

For example, considering $$$M$$$ is graphic matroid of the following graph, red and yellow edges form a spanning tree, which is a basis of $$$M$$$.